My trip to California last week had already brought me to both Dolby and DTS to experience new home theater product demos. Knowing that I’d be in L.A., however briefly, my friend (and occasional Roundtable contributor) Chris from Big Picture Big Sound clued me in to the existence of a third audio-themed conference that I might want to make time for. After some last-minute schedule adjusting, I was able to squeeze in a presentation for SRS Labs’ new Multi-Directional Audio (or “MDA”) format.

What’s SRS Labs, you may ask? Admittedly, the company doesn’t have the name recognition value of a Dolby or DTS. However, it’s a pretty big player in the area of virtual surround sound and volume-adjusting processors. Its technology is built into many HDTVs, A/V receivers, cell phones and more. (One of the company’s slogans is: “You’ve heard us. But you may not have heard of us.”) Some of our readers may recall that I reviewed the SRS Volume Leveling Adaptor last year. Although I wasn’t much impressed with that product’s glitchy performance, I could chalk that up to one bad experience and not necessarily hold it against the entire company. I was more than willing to attend this presentation with an open mind.

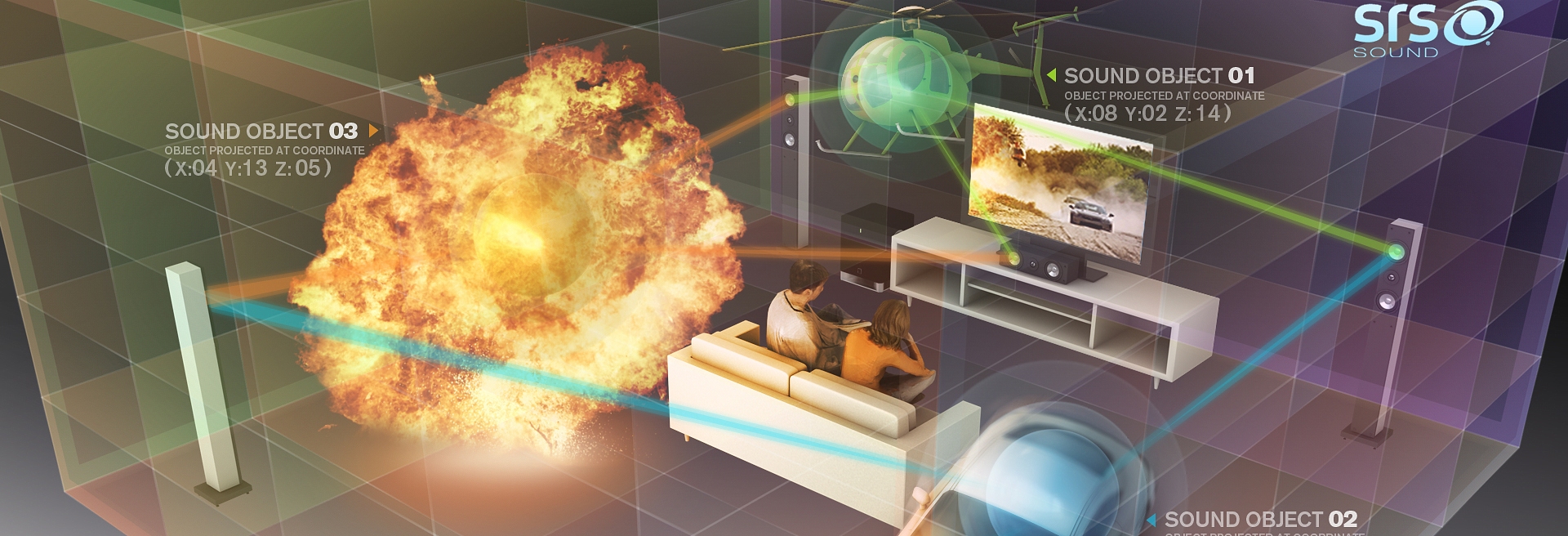

Multi-Directional Audio is an “object-based” sound format, much like the new Dolby Atmos theatrical format that I wrote about earlier this week. The basic concept behind this implementation of object-based sound is exactly the same as Atmos, so I’ll direct you back to that article rather than repeat myself here.

That being the case, what’s the difference between MDA and Atmos? Chief Technology Officer Alan Kraemer doesn’t necessarily see the products as being in competition, other than the fact that Dolby’s technology is proprietary in nature. SRS wants MDA to be an open standard that all object-based sound is created in, much as current multi-channel soundtracks are created in PCM format. Once an MDA soundtrack is completed by the sound mixers for a movie, TV show, videogame, etc., that MDA master could then be transcoded to any number of distribution formats, including Atmos. Kraemer says that MDA is “Not a codec. It’s a language.” He describes it as codec-agnostic and open for compatibility. By standardizing one common mastering format, the industry could avoid a needless format war while at the same time fostering competition between companies.

Also, and perhaps of more interest to readers of this web site, SRS wants to make an aggressive push to bring MDA and object-based sound to the home market, whereas Dolby’s Atmos is strictly a theatrical format for the time being. The demonstration I received, which was held in a conference room in the Hilton Universal City hotel, was presented on an 11.1 speaker system that appeared to be consumer-grade gear, centered around an HDTV display. Don’t get me wrong, they were nice speakers (nicer than the ones I own), but the point is that this could feasibly be a home theater product once the details are worked out as far as how to integrate this processing into an A/V receiver. (The demo was played from a computer workstation.) Kraemer claims that SRS has been working with several CE manufacturers and other home audio players to make that happen, though he couldn’t reveal specifics or give any timeframes. (This recent SRS press release may offer a hint.)

The centerpiece of the demo was a one-minute short film that featured a helicopter, police sirens and other sound effects that swirled around the room. In fact, here’s the exact video (downgraded to basic stereo for YouTube, of course):

This was played first in 11.1, and then mapped down to 5.1 and 2.0 to demonstrate the format’s scalability and SRS’ virtual surround processing, which attempted to simulate the inactive sound channels.
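For readers curious how one soundtrack can be mapped to different speaker counts, here is a minimal sketch in Python. It is not SRS’ algorithm, which wasn’t described at the demo. It assumes a simple constant-power pan between the two speakers nearest to a sound object, and the layout tables, speaker angles and the `render_gains` function are all hypothetical names made up for illustration.

```python
import math

# Hypothetical layouts: speaker name -> azimuth in degrees
# (0 = straight ahead, positive = to the listener's right).
# Angles are assumed to be distinct; the ".1" subwoofer is left out.
LAYOUTS = {
    "2.0": {"L": -30, "R": 30},
    "5.0": {"L": -30, "C": 0, "R": 30, "Ls": -110, "Rs": 110},
}


def render_gains(object_azimuth, layout):
    """Return a gain per speaker for one sound object at the given azimuth.

    The object is panned between the two speakers on either side of it
    using a constant-power (sine/cosine) law. This is an illustrative
    stand-in, not the MDA renderer.
    """
    # Walk the speakers in order around the circle.
    speakers = sorted(layout.items(), key=lambda item: item[1] % 360)
    azimuth = object_azimuth % 360
    gains = {name: 0.0 for name in layout}

    for i, (name_a, angle_a) in enumerate(speakers):
        name_b, angle_b = speakers[(i + 1) % len(speakers)]
        span = (angle_b - angle_a) % 360    # arc from speaker A to speaker B
        offset = (azimuth - angle_a) % 360  # how far the object sits past A
        if offset <= span:
            fraction = offset / span
            gains[name_a] = math.cos(fraction * math.pi / 2)
            gains[name_b] = math.sin(fraction * math.pi / 2)
            break
    return gains


# The same object position, rendered for two different rooms.
front_center_stereo = render_gains(0, LAYOUTS["2.0"])
front_center_surround = render_gains(0, LAYOUTS["5.0"])
```

In this sketch, a sound placed dead center is split evenly between left and right on the stereo layout, but goes entirely to the center speaker on the surround layout. The soundtrack only stores where the sound belongs; the playback system decides which speakers get it.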

I’ll be honest; I wasn’t wowed by the demo. Part of that may have been the venue. Again, this was in a hotel conference room. Over the two previous days, I had just sampled Dolby Atmos and some impressive DTS products in professional listening rooms. This wasn’t exactly a fair comparison. On the other hand, the MDA format did what object-based sound is supposed to do. Specific sounds in the clip deftly navigated through all channels, and a single soundtrack was adaptable for multiple output configurations. As a proof-of-concept demo, MDA works. Once SRS figures out how to integrate this with a delivery codec (or even multiple competing codecs) and get it into an A/V receiver, this could be a viable consumer product. And the things that Alan had to say about creating an open, codec-agnostic standard made a lot of sense to me. I would much prefer to see that happen than for Dolby, DTS and various other companies to all go head-to-head with proprietary object-based formats.

One question remains unanswered, however: From what source will viewers listen to MDA soundtracks in the home? Alan sounded doubtful that the Blu-ray spec would be revised to incorporate MDA or any other new sound formats. (He seems to be one of those people pessimistic about the future of physical media in general.) So what does that leave us with? Streaming media? Streaming would of course bring with it a whole lot of issues regarding compression and internet bandwidth that still need to be worked out. If that’s where this is headed, I’m not sure how long it will take to bring MDA to the home.

Before I left, Alan had one last, very intriguing demo that showcased some of the fascinating potential for MDA. While playing a recorded clip from a football game, he used a tablet computer with prototype software he called “MDA Director” to manipulate the soundtrack in real time. For example, he was able to isolate the color commentary voiceover and drag it from speaker to speaker around the room using the touch-screen interface, placing it wherever he wanted. If you’d like the announcers to sound like they’re sitting right beside you, that’s where they’ll be. Maybe you prefer them to come from the surround channels, or even overhead? No problem. He did the same with the sounds of cheerleaders and the stadium crowd, and then easily switched languages on-the-fly.

Obviously, when watching a movie, you probably don’t wish to mess around with the artistic sound design that the filmmakers want you to hear. However, this could be useful for audio commentary tracks or other supplemental features. Or, say you’re watching a concert film, and would like the sound to simulate the experience of sitting in the front row, and then move around to different seats in the venue. You can do that. The possibilities for this are very cool.

EM

What a week! It’s a wonder your ears didn’t fall off. Yes, this sounds like nifty technology. I would like to see it or something like it in physical media for the home, whether that’s Blu-ray or Déjà-vu-ray or whatever.

hurin

Will this have any chance of getting implemented? If you read the back covers of Blu-rays, it is clear that most discs have a 5.1 track even though 7.1 has been available for years.

Not much point in building an 11.1 setup if no studio will support it and all you’ve got is demo material.

Josh Zyber

One of the main points of object-based audio is that a movie in the future will only need to have one soundtrack, which will support as many channels as the theater or viewer wants to install. 5.1, 7.1, 11.1, 22.2, 63.1, whatever. It all comes from the same single sound mix that’s both backwards-compatible and future-proof.

The reason you don’t see many 7.1 soundtracks is that it requires an entirely separate sound mix from the 5.1 version. With limited support for 7.1 in theaters, many studios don’t see the point of it or want to pay for it. That’s not an issue with an object-based soundtrack. Whether a theater or home theater has an object-based playback system or just standard 5.1, the same soundtrack can be rendered out to either format with literally the push of a button.

Movies in the future will be mixed this way, because it just makes more sense for everyone. The old channel-based method will die off, just as mono has. The question is how long it will take to get it into the home.

If MDA were to be adopted on Blu-ray or some other format, any movie you bought with an MDA soundtrack could play back in 5.1 now. And if you upgraded your system to 22.2 in the future, the same soundtrack would support that too, without you needing to buy a new copy. It wouldn’t be upmixed. It would be the exact soundtrack that the original mixers want you to hear, played back through however many discrete channels your gear can support. Because the system is no longer about discrete channels. It’s about where in the room the sound of each object is supposed to come from. The speakers just facilitate that.

hurin

But the Blu-ray player and the receiver would still need to support it, right? And unless this new object-based soundtrack is backwards compatible, discs will need two tracks, the old 5.1 and the new object-based.

I personally do not see the value of this. I own a high-end 4.0 setup. But if I had spent the money on 12 speakers instead, I would have gotten a mid-end 11.1 system. Thanks, but no thanks.

Josh Zyber

Yes, both the source and receiver will need to support the new format, just as happened in the transition from lossy sound to lossless. And just as before, there will be some people who don’t want to change, which is why some form of backwards compatibility on the media will be necessary.

JM

It feels like we clearly know what the future will be, but it’s taking forever to get here.

EM

Apparently it’s cruising here using a consumer-grade jetpack.

William Henley

I like this. I think I am more excited about this than I am about Atmos. The biggest reason I haven’t gone with more speakers is that nothing supports it, and truthfully, since I moved, I am currently just using 5.1, as so few soundtracks even support 7.1, and I was not happy with the way 5.1 stuff was being matrixed. This excites me – I can have as many speakers as I want, and place them wherever I want, and these new technologies will take advantage of it. I honestly cannot wait until these hit the markets (although I still want those DTS headphones).